There was a time when artificial intelligence in music seemed destined to remain in the background. Today, that background has moved forward.

The question is no longer whether these systems are capable of generating believable sounds, but how long we will still be able to distinguish a convincing result from a real musical intention.

From distrust to curiosity

For a long time, I kept my distance from artificial intelligence applied to composition. Not out of ideological rejection, but out of professional habit. I was interested in what it could do well without intruding on creative terrain: cleaning up audio files, reducing noise, speeding up technical interventions that usually require time and patience.

Then came the first listening sessions, shared by colleagues and friends. Complete songs, synthetic vocals, ready-made arrangements. I approached them carefully, but also with the skepticism that comes naturally when you work with sound every day. I searched for the point where the mechanism broke down.

In most cases, that point came early. The harmonic progression was too rigid, the distribution of parts unnatural, the sound correct but lacking depth. There was precision, but not yet a true musical idea capable of holding a listener's attention.

When you feel a choice

At a certain point, however, one file made me stop in a way the others had not. Not because it was perfect, nor because it represented a dramatic technological leap. It struck me for a simpler reason: I sensed a choice there.

It was a pop song, nothing particularly complex. But the timbre of the voice didn't seem like the random output of a generator. It had an internal coherence, a considered color, a texture that shifted across the range without turning into caricature. For the first time, I wasn't just listening to a convincing technical achievement. I was listening to a decision.

At that moment, the discussion stopped feeling theoretical. I realized that the issue wasn't just what the algorithm was capable of doing, but who was driving it. When there's a competent ear behind it, artificial intelligence stops being a technical curiosity and becomes an extension of the creative process.

Trying it firsthand

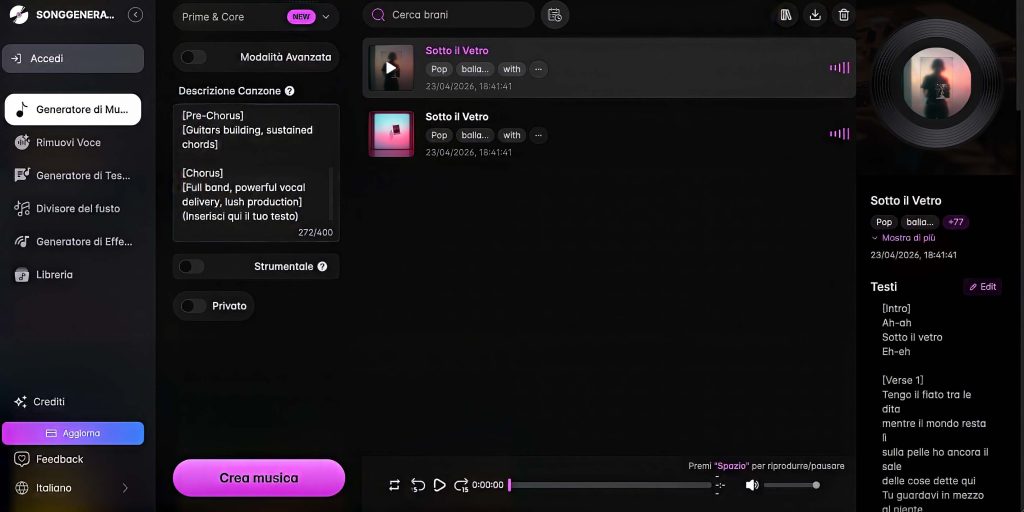

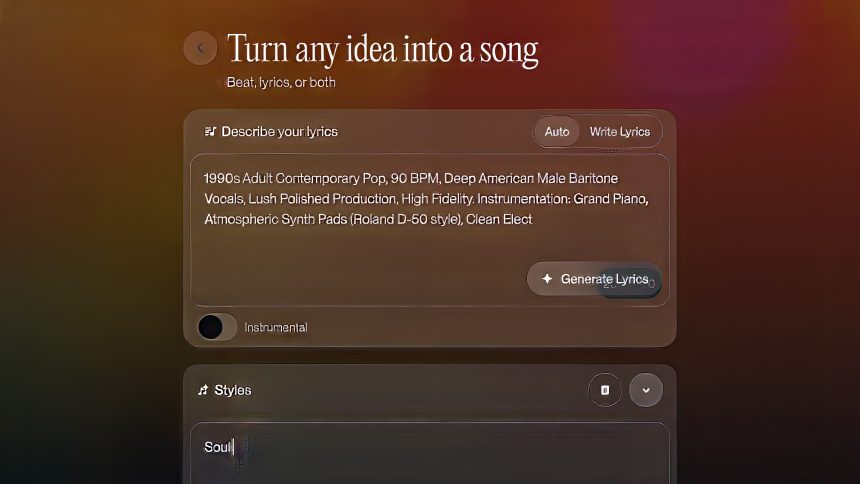

That's why I decided to try it myself. Not with the idea of delegating the music writing to a platform, but to better understand where the medium ends and human intervention begins.

I worked mostly on pop songs, because at the moment it is one of the areas where these systems deliver the most credible results. The structure is more controllable, fewer elements are in play, and so the errors are less evident. In some cases the result remained weak; in others it was surprisingly usable.

With orchestral music, however, limitations emerged almost immediately. It was not so much a question of quantity as of internal organization. There were too many elements taking up space at the same time, little hierarchy, little breathing space between the parts. At first listen, it might seem rich; on closer inspection, it often sounded saturated and static.

It's a difference that anyone who works on arrangements perceives immediately. A real orchestra is not just a collection of instruments: it's balance, movement, a continuous relationship between fullness, emptiness, and dynamics. And it's precisely here, at least for now, that many automated systems show their limitations.

Where human intervention makes the difference

The most interesting part comes after generation. When the material can be exported as separate tracks and reworked in the studio, the result changes dramatically. Mixing, dynamic processing, timbral adjustments, analog outboard gear: in the right hands, it takes very little to make many of the rigidities typical of artificial production less noticeable.

This doesn't mean that artificial intelligence works alone. It means the opposite. The more credible the final result seems, the more likely it is that someone knew where to intervene. Anyone who imagines that simply writing a generic request will yield truly convincing music underestimates everything that happens afterward: selection, correction, polishing, critical listening.

And this is where things get more interesting. Quality doesn't depend solely on the software, but on the skill of those who use it. A system can generate material, but it still can't truly replace the sensitivity that recognizes when a note is formally correct but musically out of place.

The problem of contemporary listening

There's one aspect that, personally, I find even more significant than technical evolution: the way we listen. Today, much of our music is played through devices and platforms that reduce differences, compress dynamics, and flatten out a significant portion of nuance. This changes our relationship with sound more than it seems.

Details like the distance between instruments, small timbral variations, and the way a voice moves through the mix become less perceptible. And when these details are lost, it becomes harder to distinguish what is simply well-crafted from what is truly alive.

Perhaps this is where the most delicate aspect of the matter lies. Not only in the ability of machines to better imitate human music, but in our ability to continue listening deeply. If that level of attention declines, even the difference between a generated piece and one born from a real musical gesture risks becoming less evident.

Conclusion

After much experimentation, listening, and comparison, the feeling I'm left with isn't one of alarm, but of redefinition. Artificial intelligence can already produce surprising material and, in some contexts, become an extremely effective tool. But music, at least for now, continues to reveal itself elsewhere: in the nuances that don't ask to be immediately noticed, in the small gaps, in the internal breathing of sound.

Rather than asking ourselves whether the author will remain human, we should understand whether we will be able to remain human in listening.

See you next time in the world of AI.

I completely understand the point. It's fascinating how AI is radically changing the way we perceive music, and the distinction between artificial creation and artistic intention is becoming increasingly blurred.

I spend my days between clinical and musical listening, and what you wrote struck me because it doesn't read like theory. It reads like lived experience, the kind you recognize immediately.

Reading you, I had the feeling I was speaking to someone who was truly listening, not just analyzing. And that's not a given these days.

The point about "choice" is what struck me the most. That's where everything changes. As long as you hear only a result, you can judge it: right, wrong, credible, or fake. But when you perceive a choice, you stop. Even if it's imperfect, you understand that someone has decided something there. And that gesture has meaning.

AI today can do many things well, but it lacks that internal direction. It needs someone who knows where to go. Always.

I also connected strongly with what you said about the orchestral sound. That somewhat artificial richness, full but static. It might be impressive at first, but after a few seconds you feel like you're running out of air. And breathing room is not something you add with an algorithm: you build it with experience, with mistakes, with your ear.

But you hit the nail on the head at the end. The real game is about listening. If we lose attention to detail and everything becomes fast consumption, then the difference becomes smaller. But not because AI has become "human": it's that we risk listening less like humans.

The risk is not that machines become too good; it's that we become less demanding.

The beauty of your piece is that you're neither "for" nor "against." You're inside the thing, with the tools to understand it. Keep writing, because when you talk about music with this mindset, it's always worth stopping and reading.

Nicholas Tancredi

Sound recording studio